I had to come up with a way to set up some virtual-studio-style conferencing for a virtual event. I have experience with OBS Studio, but I was required to have a soundtrack running before the stream. Even having learned a bit about broadcast video, I wasn't sure how I was going to set up the soundtrack, as OBS doesn't really have a way of playing an audio file to an interface you're not listening to.

To accomplish this I initially used a Griffin iMic with the input set to line in, hooked up to a phone's headphone jack. Unfortunately, I must have had a bad cable or connection, because the sound wasn't very good, and the time constraints left no room to chase it down. Instead of troubleshooting the cable, software inputs, etc., I remembered that there is virtual audio input software that can route songs and input from other software such as Skype.

Eventually I came across Voicemeeter Banana, an advanced virtual mixing console able to manage 5 audio inputs (3 physical and 2 virtual) and 5 audio outputs (3 physical and 2 virtual) through 5 multichannel busses (A1, A2, A3 and B1, B2). Eventually I will probably get the virtual audio channeling set up properly so that an application or browser has its own input, but for right now I just need quick and dirty, and it has to work.

I did a YouTube video about some OBS basics, which can be viewed on my YouTube channel.

You will need to have OBS Studio and Voicemeeter Banana installed.

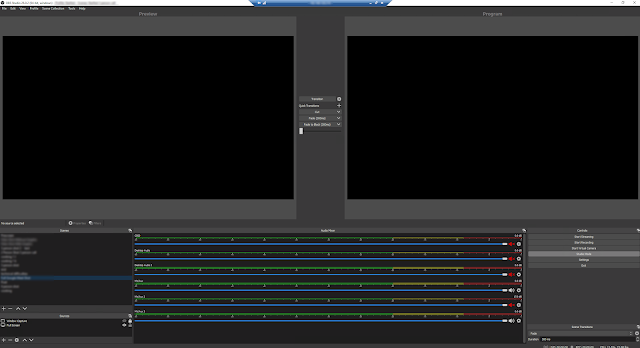

The setup for piping the audio is simple. The audio cassette in the picture can play audio files; in this case I have an MP3 loaded. B1 is the Voicemeeter VAIO virtual bus, which is why the B1 button is lit up next to the audio cassette.

Hardware Out A2 is set to the Voicemeeter Input, which we will select as Mic/Aux 3 in OBS Studio as shown below. This allows us to play the audio we require, with the option of listening to it or not through our headphones or the computer speakers (in my case the playback device is set to the Yeti Stereo Microphone).

To hear what you're playing on Mic/Aux 3, you need to have its audio monitoring set to Monitor and Output. This will play the audio on your primary computer speakers, which is the Yeti mic as stated before. If you want the audio to keep playing without hearing it yourself, you can set audio monitoring for Mic/Aux 3 to Monitor Off.

Your audio will continue to play and will stream out unless you mute the source in the OBS Studio mixer, which is shown by the speaker icon being either white or red. A red speaker means the audio is muted, while white means it is being passed through to the stream. So in the image below, Mic/Aux 3 and Mic/Aux are live and will be heard in the stream, while Desktop Audio, Desktop Audio 2 and Mic/Aux 2 are muted and will not be heard.
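As a rough way to reason about which sources end up in the stream, the mixer state described above can be sketched in Python. This is only a model for thinking it through, not OBS's API: the `Monitoring` enum and the `reaches_stream` helper are hypothetical names, and the monitoring modes given to every source other than Mic/Aux 3 are assumed for illustration.

```python
from enum import Enum


class Monitoring(Enum):
    # The three audio monitoring choices offered in OBS's Advanced Audio Properties
    MONITOR_OFF = "Monitor Off"
    MONITOR_ONLY = "Monitor Only (mute output)"
    MONITOR_AND_OUTPUT = "Monitor and Output"


def reaches_stream(muted, monitoring):
    """True if a source is passed to the stream (hypothetical helper).

    A source is left out of the stream when it is muted (red speaker)
    or when its monitoring mode is "Monitor Only (mute output)".
    """
    return not muted and monitoring is not Monitoring.MONITOR_ONLY


# (muted, monitoring mode) per source; modes other than Mic/Aux 3 are assumed
mixer = {
    "Desktop Audio": (True, Monitoring.MONITOR_OFF),
    "Desktop Audio 2": (True, Monitoring.MONITOR_OFF),
    "Mic/Aux": (False, Monitoring.MONITOR_OFF),
    "Mic/Aux 2": (True, Monitoring.MONITOR_OFF),
    "Mic/Aux 3": (False, Monitoring.MONITOR_AND_OUTPUT),
}

live = [name for name, (muted, mode) in mixer.items() if reaches_stream(muted, mode)]
print(live)  # ['Mic/Aux', 'Mic/Aux 3']
```

The sketch only covers what goes out to the stream; what you hear locally depends on the monitoring device you have selected in the OBS audio settings.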

To do the studio-style interview setup I am using Google Meet, but anything will work: Skype, GoToMeeting, Zoom. Using window or display capture and the crop filter, I crop the window down to where each person is speaking so they fit in the 1920x1080 canvas.

The Google Meet call looks like this with 3 tiles. The layout is set to 6 tiles manually, as at most you will have 3 users on screen.

The shots outlined in green show the crop filter when there are 2 people on screen; the red shows the crop filter set for when there are 3 people on screen. Below I've distorted the images, but you can see where the users are from the silhouettes. When they log in to the Google Meet call, you get them positioned and ask them not to move too much. For the framing I chose, I asked them to try to stay in the middle of their camera and used OBS to scale the crop to fit the rest of the frame.

The 2-person shot

The 3-person shot
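The crop values for each guest can be worked out ahead of time. The sketch below assumes the meeting window fills a 1920x1080 display and that the 6-tile layout is an even grid of 3 columns by 2 rows, which a real Google Meet window only approximates (it adds margins and toolbars), so treat the numbers as a starting point to adjust by eye. `tile_crop` is a hypothetical helper; its result lines up with the Left, Top, Right and Bottom fields of the OBS Crop/Pad filter.

```python
def tile_crop(col, row, cols, rows, width=1920, height=1080):
    """Return (left, top, right, bottom) crop in pixels for one grid tile.

    Assumes an even grid with no margins (an idealisation of the real layout).
    """
    tile_w = width // cols
    tile_h = height // rows
    left = col * tile_w
    top = row * tile_h
    right = width - (left + tile_w)    # pixels trimmed from the right edge
    bottom = height - (top + tile_h)   # pixels trimmed from the bottom edge
    return left, top, right, bottom


# Three guests in the top row of an assumed 3 x 2 grid
for col in range(3):
    print(col, tile_crop(col, 0, cols=3, rows=2))
# 0 (0, 0, 1280, 540)
# 1 (640, 0, 640, 540)
# 2 (1280, 0, 0, 540)
```

Each guest gets their own capture source with its own crop filter, so the 2-person and 3-person shots are just different sets of these values.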

After some thought, I decided to try a new layout in OBS where I would lay out the guests in a 16:9 format, because the guests were having a hard time staying in frame. In OBS Studio I used display capture and the crop filter to set up video feeds from the meeting app of choice (in this case Google Meet, but it could be Skype, Zoom, Teams, etc.).
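To keep a guest in a 16:9 frame, the crop has to be the largest 16:9 rectangle that fits inside their tile, and then it gets scaled up to whatever slot it occupies on the canvas. Here is a minimal sketch of that arithmetic; `fit_16_9` and `scale_to_slot` are hypothetical helpers, and the 640x540 tile and 960x540 slot are assumed example sizes rather than values from my scenes.

```python
def fit_16_9(tile_w, tile_h):
    """Largest centred 16:9 rectangle inside a tile.

    Returns (x_offset, y_offset, width, height) in pixels.
    """
    if tile_w * 9 >= tile_h * 16:
        # Tile is wider than 16:9, so the height is the limit
        h = tile_h
        w = tile_h * 16 // 9
    else:
        # Tile is narrower than 16:9, so the width is the limit
        w = tile_w
        h = tile_w * 9 // 16
    return (tile_w - w) // 2, (tile_h - h) // 2, w, h


def scale_to_slot(w, h, slot_w, slot_h):
    """Uniform scale factor that fits a w x h crop inside a slot."""
    return min(slot_w / w, slot_h / h)


x, y, w, h = fit_16_9(640, 540)
print(x, y, w, h)                        # 0 90 640 360
print(scale_to_slot(w, h, 960, 540))     # 1.5
```

The offsets are added to the tile's own crop values, so a guest who sits in the middle of their camera stays centred in the 16:9 frame.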

The blue outline is the crop from OBS Studio, and with a live camera feed you get a result with a graphical background as shown below. The top image is from an onsite camera with lighting and the other 2 are the cropped display from Google Meet.

Then you can have a computer screen, video, etc. on display. In this case it is an interview from Google Meet, where we just have the interviewer and the guest. This gives more room for the interviewer to move and keeps them from getting cut off by the tight framing of the full-screen view shown above.

Depending on the setup you can also have different framing. The one below is done with a camcorder and an HDMI-to-USB capture card, with 2-point lighting. OBS will let you make groups and add graphics and text as shown below.

I put a graphic on the left and added a tight shot, which was scaled from the wide shot above.

Audio quality is really important and depends on whether guests have a microphone. Most of the time they are using a phone or the built-in mic on a laptop, and adjustments must be made to their speakers so you don't get feedback from hearing yourself through their speakers and back through their mic. Prep takes about 30 minutes for placement and audio. For people doing this, I would recommend asking guests to wear earphones and get an iRig mic or something similar. It makes a big difference in the sound.

It is difficult to keep people from moving, but this setup does work pretty well. To see some samples, check out the STARFest - St. Albert Readers Festival videos.